From Artifact to Production: Integrating and Refining Lattice Cryptography Acceleration

This post follows on from the recent cross-post from our research collaborators at MPI-SP about their innovative design for ML-KEM and ML-DSA acceleration. Today, we’ll focus on what happened in between the researchers creating the initial implementation and now, when we have lattice cryptography support derived from their work in our production open-source silicon repository. For more on this collaboration with a higher-level and broader lens, look out for our upcoming joint talk at Real World Crypto in March!

We believe strongly that open-source projects and academic research reinforce one another. Open-source projects give researchers a realistic starting point for experiments so they don’t need to build everything themselves or reverse-engineer a blackbox product in order to publish papers. And in return, open-source projects can benefit from cutting-edge research being developed natively on their codebases.

Although research and open-source development can happen completely independently, we have found that early and frequent communication helps both sides. Researchers can offload non-novel, engineering-focused tasks to open-source developers and ask questions about the codebase. Developers can get advance insight about research directions and can share their project’s constraints and priorities to increase the odds of adoption. Finally, and perhaps most importantly for both sides, open-source developers can integrate and refine research artifacts and then include them into projects for real-world impact. In the case of the ML-KEM and ML-DSA collaboration, which introduced a significant amount of new code and features to an existing open-source codebase, this was a complex task that ranged from improving RTL design verification coverage to optimizing the memory and performance usage of the code.

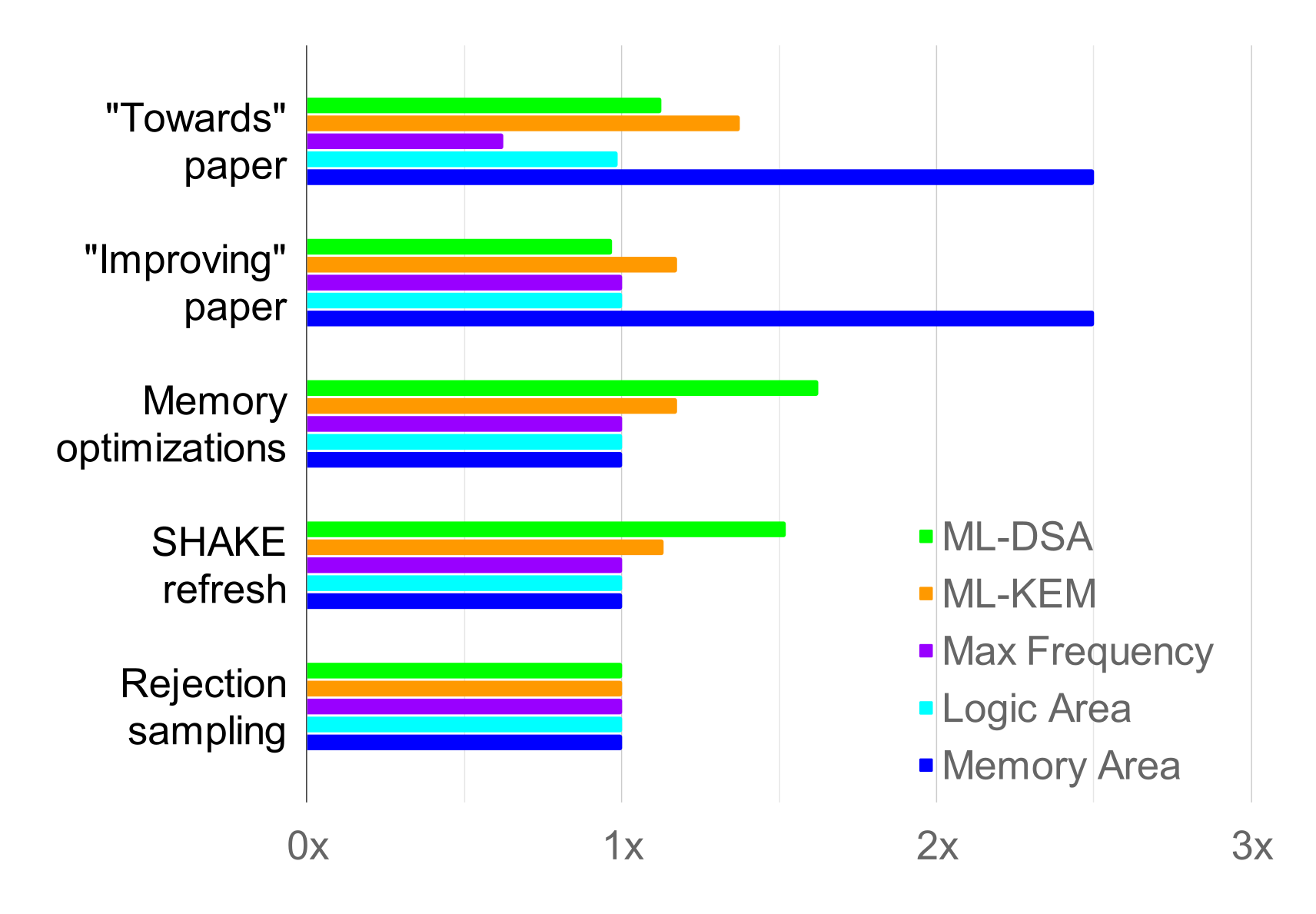

The following graph1 shows how the overall area and latency for the design improved over time as both ZeroRISC engineers and academic researchers worked on it:

Everything in the graph is normalized to the latest version, so it’s easier to see lots of very different metrics with different units in one chart. The “baseline” measurement is from the Towards ML-KEM and ML-DSA on OpenTitan2 paper, which evaluated an implementation of ML-KEM and ML-DSA on the base OTBN design, just with more area added to support the datatypes. You can see that initially, the latency for ML-KEM and ML-DSA was 8-10x higher than it is now! The Towards paper so dramatically improved the latency that it’s hard to read the graph with the baseline there. Here’s the same data but without the baseline measurement so the differences between other versions are clearer:

Following up from Towards, the subsequent Improving ML-KEM and ML-DSA on OpenTitan3 paper further improved latency for both ML-KEM and ML-DSA, and brought the maximum frequency up. After (or in fact, concurrent with) these vital contributions from academia, ZeroRISC engineers stepped in to refine the results. First we applied stack optimizations, which brought the memory area down at the cost of latency for ML-DSA. However, we were then able to mostly recover the latency costs and accelerate ML-KEM with improvements to the SHAKE hardware interface and both schemes’ rejection sampling routines.

In the rest of this post, we’ll go through each step of the optimization process and also discuss how we worked together with academic partners.

Collaboration setup

Before we could start any of the optimizations, it was clear that it would be helpful for both us and our academic collaborators to work on the same repository so we all had shared state. Since we would be doing our optimizations in parallel with new research, it was important to stay in sync and make sure the changes didn’t diverge too much.

We initially limited access to this repository to protect collaborators’ ability to publish and minimize the chance that partial results could be taken out of context. However, we also wanted future readers to have a faithful record of how the project evolved. Especially in security, keeping context like discussions of technical tradeoffs and the ordering of changes in the public record is a core advantage of open-source development. We therefore worked from a private repository initially, then changed the visibility to public after the new research was complete.

The results from the Towards ML-KEM and ML-DSA on OpenTitan paper had already been released as an artifact in a dedicated git repository. This code was based on a relatively old version of the OpenTitan codebase, so the first challenge was to make the changes work with a more recent version. Unfortunately, the artifact had erased the git history, so we needed to copy the code over and do manual surgery on it to make it run rather than rebasing.

Once the code was running, we set up some very basic checks to protect the main branch from breaking changes. With the basic infrastructure in place, we were ready to start optimizing the hardware and software.

ML-DSA stack optimization

The first thing we needed to do was improve the memory usage of ML-DSA. The initial implementation would have required the ACC coprocessor to have 128KiB of data memory, up from…4KiB in the original OTBN design. This would cost too much area for such a small embedded device, but luckily we were not the first to address this problem; there was substantial existing research in papers like Dilithium for Memory Constrained Devices4 and Compact Dilithium Implementations on Cortex-M3 and Cortex-M45, as well as open-source implementations like pqm4. Another resource consulted during this memory optimization process was Warp Drive Engineering6, Amber Sprenkels’s PhD thesis, which overlaps with the other two papers.

Following these references, we experimented with different memory optimizations. This table summarizes their effect on the stack needed for ML-DSA-87 signing, the most memory-hungry operation:

| optimization | new stack size (bytes) | change (bytes) | approx. slowdown |

| Stream matrix A | 56672 | -64160 | 125% |

| Stream y | 49504 | -716 | 3% |

| Stream s1, s2, t0 | 19808 | -29696 | 14% |

| Compress w1 | 11872 | -7936 | 2% |

| Accumulate y | 10848 | -1024 | 0.1% |

As you can see from the table, most of the performance gains come from streaming various values. The ML-DSA signing procedure is basically a big rejection loop that keeps looping until it generates a valid signature, so naturally most performance-sensitive implementations compute as much as they can before the loop and then keep it live until the operation is complete.

However, these values can be huge. The matrix A, for example, is a k × l matrix where each entry is a polynomial of 256 24-bit coefficients each. Vectorized implementations like ours will probably store each coefficient in a 32-bit slot, for a round 1024 bytes = 1KiB per polynomial. Depending on the parameter set, the matrix has 16-56 entries, so that means a whopping 56KiB for ML-DSA-87, just for A!

In a memory-optimized implementation, we trade off performance for space by recomputing A again from its seed on every iteration of the sign loop. We never need to keep the entire matrix in memory; we stream it as we perform a matrix-vector multiplication and store only the resulting vector. The matrix expansion runs on SHAKE operations, and helpfully we have a hardware SHAKE to help minimize the cost of recomputation. A 125% slowdown sounds rough until you see that Compact Dilithium Implementations reported 234-289% slowdowns for the same optimization on Cortex-M4!

While it’s possible to reduce ML-DSA-87 all the way down to 8kB of stack based on the papers we referenced, further memory optimizations would also come at a performance cost and 11kB is low enough for our initial requirements. Accounting for I/O buffers, constants, and expected overhead from masking, we think that this amount of stack reduction will be sufficient for our goal of fitting first-order-masked ML-DSA-87 in 32KiB. We may need a few additional smaller adjustments, for example to how we store compile-time constants, but we’ll reserve those for when we have more precision on exactly how much space we need to make.

In the benchmarks we ran for the chart at the top of this post, we see overall slowdowns for memory optimizations of 82%, 84%, and 138% for ML-DSA-44, -65, and -87 signing respectively7. This is surprisingly good; in our blog post a little over a year ago we expected 200-400% slowdowns. Part of that difference is because we didn’t have to implement the most extreme optimizations, and part of it is because of architectural differences between ACC and Cortex-M4.

We later applied a subset of the same memory optimizations to key generation and verification operations. It’s easier to optimize these than signing; while the signing procedure is a loop that needs most values to remain live until the end of the computation, key generation and verification generally process each value only once. For the same reason, the performance cost of memory optimizations were much smaller on key generation and verification: about 0.1-0.4%.

Hardware and design verification integration

We concluded from our memory optimization experiments that 32KiB each of data and instruction memory for the coprocessor will be sufficient for first-order-masked ML-DSA-87. That still required a hardware change to update from 8KiB of instruction and 4KiB of data memory in the original design. To support ML-KEM and ML-DSA acceleration on ACC, a vector ISA extension, new adder, new multiplier, and miscellaneous control/datapath registers like unique WSR/CSR registers were also added.

It became evident that this configurability of ACC should be expanded to the RTL itself. To accommodate for the differing design constraints the ML-KEM and ML-DSA capabilities were controlled behind the AccPQCEn SystemVerilog parameter. The instantiation of the vectorized adder, multiplier, and PQC unique datapaths are contingent on the usage of the AccPQCEn parameter. As a result, we can eliminate the additional hardware overhead required for ML-KEM and ML-DSA on ACC when the PQC algorithms are not desired.

Using SystemVerilog parameters has a considerable impact on the design verification (DV) and coverage efforts. The original ACC simulation config was turned into a base configuration to specify the DUT being tested, alongside testcases and common simulation environment variables. A pair of configurations were created to inherit the base, while each extended their appropriate AccPQCEn parameter value and DV dependencies. The testing infrastructure relies on the generation of randomized instructions, often organized into a subset of groups referred to as a snippet. These snippets cover the entire range of instructions, from straight-line instructions like arithmetic, to conditional instructions like branches or jumps. The more complex snippet generators include illegal instructions aimed at verifying ACC behavior, including bad loops, or an illegal CSR/WSR address. The result of snippet generation is an assembly test program to run on both ACC and accsim, a specialized simulator of ACC’s expected behavior, for comparison.

In order to collect coverage metrics for ACC, we needed to expand the existing snippet infrastructure. Firstly, we expanded the straight-line instruction snippet to include the new vector ISA instructions. For coverage, we expanded the set of covergroups in order to capture the new set of instructions and instruction encodings. The introduction of new CSR/WSR registers also constituted an expansion of the related covergroup, with appropriate bins and crosses based on the parameter value. At the same time, we updated the bignum_insn.yml for ACC to include a flag on all instructions only supported by the AccPQCEn parameter. This allowed us to dynamically adjust the list of valid and illegal instructions generated for each test case. As previously mentioned, accsim is an ISS model of ACC and runs in lockstep to compare instruction execution, processor state, and register values. The IP and DV environment parameterization was also extended to the simulation model.

In addition to the new instruction datapaths, we introduced a side-load interface connection between KMAC and ACC. Within the DV environment, a UVM agent was created for the KMAC interface to respond to hash requests generated by ACC, and drive the appropriate digest response. Generating KMAC requests in ACC requires a sequence of instructions to be executed in order to write to the appropriate WSR/CSR registers. This behavior constituted a more complex snippet generator, in order to evaluate coverage metrics like line, toggle, and conditional etc. The generator works by creating a sequence of instructions to configure the hashing mode into SHA3/SHAKE with different drive strengths and creating a variable length message. The end of the snippet attempts to read the digest from KMAC, and for XOF’s a variable number of reads in order to exhaust the Keccak state. Included in this testing is the ability to generate oversized and undersized message payloads to KMAC in order to test ACC’s response.

Improved multiplier and adder

As we were making the above changes, Ruben Niederhagen at Academia Sinica and Hoang Nguyen Hien Pham at MPI-SP made further progress on the accelerated ISA design. In their paper Improving ML-KEM and ML-DSA on OpenTitan, they adjust the original ISA to remove the vectorized modular multiply instruction and replace it with an updated non-modular vector multiply that has additional modes to make software modular reduction faster. This resulted in better throughput overall for the multipliers, reducing cycle counts for top-level ML-KEM and ML-DSA operations up to 17%. Further adjustments they made to the vector adder improved the design’s maximum frequency dramatically, by 36-75% depending on the ASIC or FPGA toolchain. Despite all of these latency improvements, area is hardly affected, with their paper’s two ASIC targets showing either a 3% decrease or a 6% increase in area.

Of course, given the impressive results, we wanted to integrate these new changes. As a result of frequent communication and a shared development repository, we knew to expect the update and the process was straightforward.

We also put the researchers in contact with Andrew “bunnie” Huang and Robert Schilling, who were able to run the designs through additional synthesis pipelines. This allowed the researchers to better understand how the design performed with different synthesis tools, as well as giving us at ZeroRISC early feedback on how the design might work for our customers and what integration work we might need to plan for.

KMAC interface improvements

As we integrated the results from the Towards and Improving papers, we made some tweaks to the KMAC/ACC interface as well. In the original implementation from Towards, software sets the length of a SHAKE/SHA3 input at the start of the computation and then repeatedly writes to the kmac_msg register. The KMAC block reads all bytes from each write until the expected length is reached. However, sometimes ML-KEM and ML-DSA hash multiple concatenated values of different lengths, and it’s not convenient (especially when memory is tight) to copy everything into a single buffer. For this reason, we added a 32-bit CSR register kmac_partial_write. Writing to the register applies a byte mask to the next word written to the kmac_msg_data WSR register. After applying the partial write value the kmac_partial_write CSR clears itself until written to again. This makes it easier to send multiple values to KMAC without copying, improving performance and providing greater flexibility.

We also reduced the frequency of ACC stall cycles attributed to the KMAC interface. Attempting to read a digest with the bn.wsrr instruction that is not yet ready will intentionally incur a stall until KMAC is finished. The masked Keccak implementation in KMAC requires 4 cycles per round, with additional clock cycles for the ingress and egress of data. For the Keccak XOF’s used by ACC, there is an egress overhead of 3 clock cycles to shift out the new digest so long as the Keccak state is not exhausted. ACC uses two new interface ports, next and hold, to assert control over KMAC during XOF computations. The hold signal is used to maintain exclusive control of KMAC for extended periods as next is asserted by ACC whenever a new 256-bit digest is requested. Originally, the next signal’s assertion was bound to the bn.wsrr instruction execution when starting a digest read. This was guaranteed to incur a stall for the length of the data shift period on every read.

To combat this stall we implemented an eager refresh of the digest. We recognized that in an ideal scenario we perform intermediate operations on ACC while a new digest is being loaded. To accomplish this, the next signal assertion was coupled to the previous digest read. In doing so we are able to speculatively request the next digest from KMAC, reducing the maximum stall cycles per read by 1. This change already had a noticeable impact on latency for ML-DSA and ML-KEM (about 5% and 4% overall, respectively). The larger performance improvement comes from the fact that a new digest read request from bn.wsrr is not strictly necessary to load a value to ACC. This opened the option to improve software optimizations by executing intermediate operations between digest loads, and remove the guaranteed KMAC stalls.

Rejection sampling speedups for ML-DSA

One of the most time-consuming steps in all ML-DSA operations is the sampling of the matrix A. Each polynomial in the matrix must be separately expanded from a seed value using the SHAKE XOF. The expansion consists of sampling 3 bytes at a time from the SHAKE output, clearing the high bits and rejecting all inputs that are still greater than the 23-bit modulus q = 8380417 until we sample 256 valid coefficients. Even with hardware acceleration for the actual SHAKE computations, this operation took 40-60% of cycles for all top-level ML-DSA operations!

The first opportunity we found to speed up the rejection loop was to vectorize the sampling routine. Since q is actually quite close to 223, rejections during ML-DSA sampling are rare. A random 23-bit number has a 99.9% chance of being within the valid range. So we wondered – since coefficients were discarded so rarely, could we push complexity out of the hot loop and into specialized discard logic that would run only rarely? The answer was a resounding yes.

Thanks to the fast vectorized ISA we have from the Towards paper, we can sample, unpack, and store candidate coefficients several at a time. Only once we assembled 256 coefficients speculatively into a polynomial did we actually check the bounds. Again taking advantage of the fact that rejection is rare, the bounds-check is vectorized; we subtract the modulus from 8 coefficients at a time and mask out the bits that will be set if the subtraction underflowed (i.e. the modulus is greater than the coefficient). Then, we can check the zero flag to see if all 8 coefficients are in bounds, which is true 99.2% of the time on average.

In the rare case that there is an invalid coefficient in the vector, we run a specialized discard routine to locate the bad coefficient, shift the whole polynomial one place, and then sample a new coefficient at the end. This discard routine is comparatively slow, but it’s so rare that it doesn’t matter; the speedup we observed from this initial vectorization was 20-40% across each top-level ML-DSA operation.

We were then able to gain further speedups by taking advantage of the KMAC hardware tweaks. Since it’s predictable when the SHAKE digest will need to wait ~100 cycles to refresh, we could unroll the loop and use time when we’d otherwise stall to precompute the check for bad coefficients, keeping track of the index of the first out-of-bounds coefficient. Most of the time (~78% of polynomials), there will be no bad coefficients at all and we can skip the check-and-discard logic entirely. Otherwise, we can immediately know where to start the discard process. We could also send the input to KMAC a bit early to avoid stalls before the first digest was ready. These further improvements brought us to an overall 52% speedup for top-level ML-DSA operations.

Rejection sampling speedups for ML-KEM

Like ML-DSA, we also found that core ML-KEM operations were bottlenecked by rejection sampling. Prior to our optimizations in this section, the introduction of eager KMAC refreshing and a carefully optimized NTT routine had already eliminated the other typical bottlenecks one might typically find in an ML-KEM implementation: benchmarking ML-KEM-512 revealed that the poly_gen_matrix routine, which generates the public matrix ÂT during encryption, contributed around 35-40% of cycles to decapsulation.

Recall that in ML-KEM, the ÂT matrix is a k × k matrix, where k = 2, 3, 4 for ML-KEM-512, ML-KEM-768, and ML-KEM-1024 respectively. Each entry of this matrix is deterministically sampled from a public key by passing it through SHAKE-128 just like with ML-DSA.

Digging into poly_gen_matrix’s performance, we found that processor stalls were a significant contributor to latency, representing 25% of the overall cycles spent in poly_gen_matrix. These stalls weren’t a result of waiting on the KMAC engine (there were sufficient delays between KMAC calls that only the initial SHAKE squeeze resulted in a stall) but instead arose from branching in the core sampling logic. Indeed, as part of defense against Spectre-style attacks, the ACC is designed to always require two cycles per branch regardless of whether a branch is taken or not. While this is necessary for security, it also means that during branching-heavy code such as a rejection sampling loop, the ACC can spend a significant amount of time in processor stalls.

We might be tempted to try to employ the same eager sampling trick that worked for ML-DSA. One crucial detail with ML-KEM, however, is that the rejection sampling routine rejects coefficients at a much higher rate than ML-DSA does. ML-KEM coefficients are sampled modulo q = 3329 by sampling 12-bit integers, meaning that a candidate coefficient will only be accepted with probability 3329/212 ≈ 0.813..., or about 81.3% of the time. As such, the technique used to optimize ML-DSA’s poly_uniform won’t work, as too many incorrect samples would accrue to handle efficiently post-hoc.

Since we couldn’t amortize rejection handling costs as in ML-DSA, we had to find a way to eliminate stalls while encountering new candidate coefficients. Originally, the core of the rejection sampling logic in _poly_uniform_inner_loop would review 20 coefficient candidates in the wide register shake_reg containing the latest SHAKE-128 output. Accepted coefficients needed to be stored in memory at 16-bit boundaries to leverage vectorized instructions later, so an accumulator wide register was used to hold 256 total bits/(16 bits/coefficient) = 16 coefficients and an accumulator_count register was used to track when the accumulator register was full and needed to be written out.

(coefficients in accumulator rejection sampled from shake_reg)

┌────┬────┬────┬────┬────┬────┬────┬────┬── ──┬────┬────┐

│0aaa│0bbb│0ccc│0ddd│0eee│0fff│xxxx│xxxx│x ... x│xxxx│xxxx│ accumulator

└────┴────┴────┴────┴────┴────┴────┴────┴── ──┴────┴────┘ (wide register)

0 1 2 3 4 5 6 7 8 14 15

▲

│ └─ accumulator_count = 6

(written out (general purpose register)

│ to memory

once full)

▼

|----|----|----|----|----|----|----|----|- ... -|----|----|- ... coefficient buffer

▲ (ACC memory)

└─ write-out memory pointer

(general purpose register)The full process for the core rejection sampling loop initially looked like the following (see here for the implementation):

- For 20 times:

- (Branch #1) If our write-out memory pointer is past the last valid address, jump to (*) to skip further sampling

- Fetch a 12-bit candidate coefficient

candby masking the least significant bits of the SHAKE-128 output - Compare

candto q = 3329 - (Branch #2) If the carry bit isn’t set (i.e. 3329 <=

cand< 4096), jump to (*) to reject the candidate - Shift

candintoaccumulatoraligned to a 16-bit boundary - Add 1 to

accumulator_count - (Branch #3) If

accumulator_count< 16, jump to (*) to skip writing out to memory - Write

accumulatorout to memory and increment the write-out memory pointer - Reset

accumulator_countto 0 to indicate that theaccumulatoris empty - (*) Shift the SHAKE-128 output right to discard the 12 least significant bits

- Return to rest of

poly_gen_matrix

The lines labeled “Branch #1,” “Branch #2,” and “Branch #3” were where the majority of branch stalls arose from, so these were the lines that were targeted. Our first observation was that Branch #1–the branch signaling that poly_gen_matrix had already sampled its last coefficient–was only ever taken once in dozens of calls to this hot loop. Because of the necessarily unpredictable nature of rejection sampling, we couldn’t eliminate this check entirely, but we found that in some cases it could be elided.

Indeed, to reduce the impact of Branch #1 in the above logic, we found that we could separate out the above loop into two cases: one where at least 20 coefficients were still needed and Branch #1 could be eliminated, and another where we need Branch #1 since fewer than 20 coefficients are needed (new logic in bold, skipped checks struck through):

- Subtract the first address past the output coefficient buffer from the write-out pointer

- Compare it to 64 bytes (= 2 accumulator write-outs, greater than 20 coefficients)

- If the carry bit is set, jump to (**) to skip unnecessary checks

- For 20 times:

- (Branch #1) If our write-out memory pointer is past the last valid address, jump to (*) to skip further sampling

- Fetch a 12-bit candidate coefficient

candby masking the least significant bits of the SHAKE-128 output - Compare

candto q = 3329 - …

- Return to rest of

poly_gen_matrix - (**) For 20 times:

(Branch #1) If our write-out memory pointer is past the last valid address, jump to (*) to skip further sampling- Fetch a 12-bit candidate coefficient

candby masking the least significant bits of the SHAKE-128 output - Compare

candto q = 3329 - …

- Return to rest of

poly_gen_matrix

Since skipping this check eliminates two cycles per iteration in a hot loop, it more than compensates for the small extra bit of logic to check whether 20+ coefficients are still needed.

This also led to a nice follow-on optimization, as now executing the latter loop implies that there are 20+ coefficients to generate. Since this is more than the 16 coefficients accumulator can hold, we can eagerly sample as many coefficients as there are open slots in accumulator without worrying about filling it. This means we can eliminate Branch #3 from this new eager step, only handling potential accumulator fills afterward.

- Subtract the first address past the output coefficient buffer from the write-out pointer

- Compare it to 64 bytes (= 2 accumulator write-outs, greater than 20 coefficients)

- If the carry bit is set, jump to (**) to skip unnecessary checks

- For 20 times:

- (Branch #1) If our write-out memory pointer is past the last valid address, jump to (*) to skip further sampling

- Fetch a 12-bit candidate coefficient

candby masking the least significant bits of the SHAKE-128 output - Compare

candto q = 3329 - …

- Return to rest of

poly_gen_matrix - (**) Subtract

accumulator_countfrom 20 to get the remaining number of slotsaccumulatorhas open: - For that number of times:

(Branch #1) If our write-out memory pointer is past the last valid address, jump to (*) to skip further sampling- Fetch a 12-bit candidate coefficient

candby masking the least significant bits of the SHAKE-128 output - Compare

candto q = 3329 - …

(Branch #3) Ifaccumulator_count< 16, jump to (*) to skip writing out to memoryWriteaccumulatorout to memory and increment the write-out memory pointerResetaccumulator_countto 0 to indicate that theaccumulatoris empty- …

- For the remaining number of times:

(Branch #1) If our write-out memory pointer is past the last valid address, jump to (*) to skip further sampling- Fetch a 12-bit candidate coefficient

candby masking the least significant bits of the SHAKE-128 output - Compare

candto q = 3329 - …

- Return to rest of

poly_gen_matrix

At this point, the vast majority of the stalls from Branches #1 and #3 have been eliminated. Branch #2 is a bit trickier though, as it represents the actual rejection sampling branch, which is intrinsically necessary as a conditional piece of logic. For this, we actually move the conditional logic away from branching instead using the bn.sel instruction to select between two versions of accumulator depending on whether a candidate should be accepted.

In detail, recall that Branch #2 when taken skips several steps, including:

- Shifting the candidate into

accumulator - Updating the

accumulator_count - Checking if

accumulator_countindicatesaccumulatoris full 3a. If so, storing the contents ofaccumulatorto memory and resettingaccumulator_count

To handle (1), we simply replace shifting the candidate into accumulator with shifting it into a separate wide register, selecting between the contents of new register and the old one using the carry flag in a single instruction:

/* Shift the candidate into `accumulator`, storing the result in `accumulator_new` wide register. */

bn.rshi accumulator_new, cand, accumulator >> 16

/* Select between the previous `accumulator` and `accumulator_new` based on the carry flag. */

bn.sel accumulator, accumulator_new, accumulator, FG0.CFor (2), we can craftily make use of the fact that the carry flag is the least significant bit of the flags CSR, so we can mask it and add it to the accumulator_count

csrrs a4, 0x7C0, zero /* Read flags */

andi a4, a4, 1 /* Mask carry flag to detect underflow */

add accumulator_count, accumulator_count, a4 /* Move to next slot iff not rejected */(The perhaps overly astute reader will note that this add is actually a sub in the most recent code; this is because accumulator_count was swapped to count the number of remaining slots to allow a minor performance improvement.)

Item (3) is actually just the Branch #3 that we eliminated from the eager sampling loop. It needs to remain in the following loop that handles accumulator rejection, but it’s fine (and performance-wise advantageous) to run this check superfluously even if a candidate wasn’t accepted this iteration. Note that we do occasionally end up triggering a second write-out of the accumulator to memory, but that in every such case the written value is exactly what was already present, maintaining correctness of the rejection sampling loop.

With these last small tweaks to eliminate Branch #2, we have completely eliminated 30-40% of the overall processor stalls, reducing ML-KEM decapsulation cycle counts by about 20% across all parameter sets. Note that the eager sampling loop we added is now entirely branchless, despite the fact that we couldn’t apply the same trick as ML-DSA. The updated implementation of _poly_uniform_inner_loop can be found here.

The glorious rebase

After all of the changes above were merged into the development repository, we felt that the hardware and software implementation was mature enough to integrate into ZeroRISC’s primary repository. While our testing set up let us know our software tests for ACC were passing, that was about it. At the end of the day though, how much work could it really be to upstream this? As it turns out, quite a bit, and certainly emphasises the importance of a high quality testing framework.

Despite the development repository sharing a common commit history with our upstream, it hadn’t been synced for roughly six months. We had contributed nearly 200 commits between KMAC, ACC, and SW over that period, while the upstream was accumulating commits at a much more rapid pace. Thankfully, the vast majority of commits did not have conflicts, as development was reasonably independent. One wrinkle: during the development period, a commit was merged upstream that changed the endian representation of crypto tests for ACC supported algorithms, while we had been writing new crypto tests for ML-KEM and ML-DSA based on the format used at the time of the development repository’s creation. After resolving this merge conflict, which impacted a large number of the now 300 crypto tests, we were inching closer to an upstream merge.

The remaining problem? A very large wall of red lint marks. Through our development we either modified or created 237 files, a mixture of SystemVerilog, Python, Assembly, and a few other formats on which we performed extensive lint checks for. Every time we fixed one lint check, it would free up the next one to run, leading to an iterative cycle. Finally we had all green lint checkmarks, but now a failing FPGA test. The FPGA tests synthesize the entire top-level design and load software tests to execute and verify IP blocks and that our cryptographic functions are working properly with KAT test vectors. As it turns out, we had created a small bug in the development process, which our minimal research testing framework did not catch. Nevertheless, one more commit and we were finally done capstoning a multi-year, multi-party multi-publication research collaboration that resulted in an open-source, state-of-the-art Asymmetric Cryptographic Co-processor.

This merge isn’t the end of our refinement work; far from it. We have more ideas for how to improve the code size metrics and are working with our research collaborators on masking techniques and even potential additional ISA tweaks. Stay tuned for more, including the upcoming joint talk at Real World Crypto in Taipei!

Interested in learning more? Sign up for ZeroRISC’s early-access program or contact us at info@zerorisc.com.

Some quick notes on graph methodology: The latency metrics were from running 10 identical pseudorandom tests per parameter set and operation on each version at commits 1afe29860e, 3b6febaadc, aa7b8153c1 dbd3341285, and 37250106b5. We took the average of the 10 tests for each operation, then added the averages for ML-DSA and ML-KEM respectively and normalized to the latest version. The baseline numbers were back-solved by applying the speedups from the Towards ML-KEM and ML-DSA on OpenTitan paper, since that code was never in our repository. Although 10 tests is not enough for statistical significance on the absolute number of cycles, particularly for ML-DSA signing, it’s good enough for a sense of the relative difference between two versions if the tests are identical. The logic area and frequency numbers are from the ASIC Genus 7nm counts in the paper Improving ML-KEM and ML-DSA on OpenTitan. The memory area assumes 32KiB of instruction memory in all cases and rounds up to the next power of 2 for data memory (e.g. if ML-DSA signing takes 20KiB and everything else fits in 16KiB, we still round to 32KiB).↩︎

Towards ML-KEM & ML-DSA on OpenTitan. Amin Abdulrahman, Felix Oberhansl, Hoang Nguyen Hien Pham, Jade Philipoom, Peter Schwabe, Tobias Stelzer, Andreas Zankl. IEEE S&P 2025. https://eprint.iacr.org/2024/1192↩︎

Improving ML-KEM and ML-DSA on OpenTitan: Efficient Multiplication Vector Instructions for OTBN. Ruben Niederhagen, Hoang Nguyen Hien Pham. CHES 2026. https://eprint.iacr.org/2025/2028↩︎

Dilithium for Memory Constrained Devices. Joppe W. Bos, Joost Renes, Amber Sprenkels. AfricaCrypt 2022. https://eprint.iacr.org/2022/323↩︎

Compact Dilithium Implementations on Cortex-M3 and Cortex-M4. Denisa O. C. Greconici, Matthias J. Kannwischer, Amber Sprenkels. https://eprint.iacr.org/2020/1278↩︎

Warp Drive Engineering: Implementing and Optimizing the Dilithium Signature Scheme. Amber Sprenkels, PhD thesis. 2024. https://electricdusk.com/files/2024-11-03_thesis.pdf↩︎

The slowdown for ML-DSA-87 does not match the table exactly because the table slowdown approximations were calculated based on single tests, at the time the memory optimizations were developed. Since this work overlapped with improvements to the hardware multiplier and adder, we later reapplied the memory optimizations on top of that work. Because we developed benchmarking infrastructure later on top of that new state, it was easier to get comparison numbers for “improving” vs “improving with memory optimizations” than to get the comparison for the original memory optimization commits. But of course, since the new multiplier and adder changed the performance characteristics of the implementation, the slowdown from memory optimizations also shifted a bit.↩︎